Summary

AI memory layers retain context from earlier interactions, so a model does not have to reprocess the same material in every session. This could make AI workflows considerably more efficient.

Innovative Developments in AI

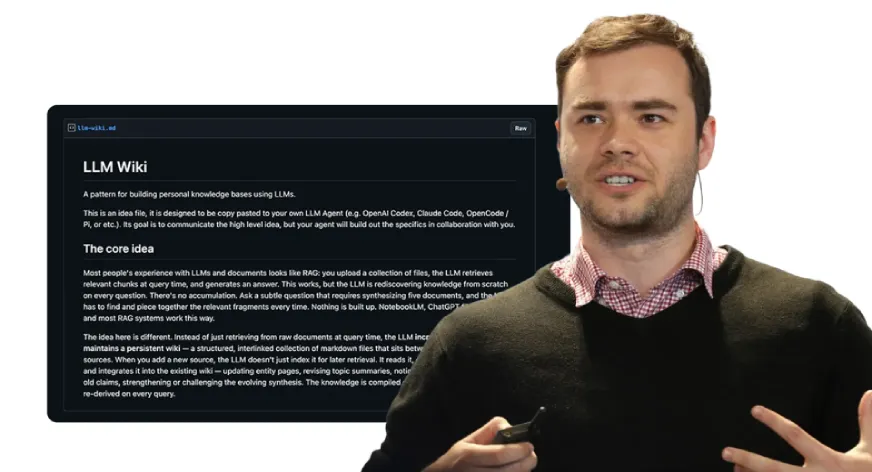

Andrej Karpathy has demonstrated how AI memory layers can work through his LLM Wiki and the new tool Graphify. A memory layer stores the results of previous interactions, so the model does not have to reprocess all the data on every request. This is particularly relevant for applications that involve large codebases or extensive research collections.
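To make the idea concrete, here is a minimal sketch of a memory layer, assuming the simplest possible design: results are remembered per document, keyed by a hash of its content, so unchanged material is never processed twice. The names `MemoryLayer`, `recall`, and `fake_summarize` are hypothetical and used for illustration only. This is not how LLM Wiki or Graphify is implemented, and the summarizer is a stand-in for a real model call.

```python
import hashlib
from typing import Callable, Dict


class MemoryLayer:
    """Hypothetical cache that remembers the result of processing each document."""

    def __init__(self, process: Callable[[str], str]) -> None:
        self._process = process            # the expensive step, e.g. a model call
        self._store: Dict[str, str] = {}   # content hash -> remembered result
        self.calls = 0                     # how often the expensive step actually ran

    def recall(self, text: str) -> str:
        # Identical content always produces the same key.
        key = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if key not in self._store:
            # Not seen before: process once and remember the result.
            self.calls += 1
            self._store[key] = self._process(text)
        return self._store[key]


def fake_summarize(text: str) -> str:
    # Stand-in for a model call (assumption): keeps the first five words.
    return " ".join(text.split()[:5])


memory = MemoryLayer(fake_summarize)
docs = [
    "Revenue grew in Q1 across all regions.",
    "Churn fell after the pricing change.",
]

first_pass = [memory.recall(d) for d in docs]    # both documents are processed
second_pass = [memory.recall(d) for d in docs]   # both are served from memory
```

After both passes, `memory.calls` is still 2, because the second pass reuses what was remembered. A production memory layer would also need persistent storage and a way to retire stale entries, which this sketch leaves out.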

Importance for the BI Market

This development fits the broader trend of AI integration in business intelligence, where knowledge retention is critical. Other players such as OpenAI and Google are also developing more advanced LLMs, but adding memory layers could represent a significant leap forward in its own right. Retained context can make BI projects more efficient and more focused, which directly affects how companies make data-driven decisions.

Concrete Action Points for BI Professionals

BI professionals should prepare to bring AI tools with memory layers into their workflows. A useful first step is to explore where this technology can streamline existing processes and make insights from earlier analyses reusable.
